Company Claims that Limits Triggered Federal Retaliation, Violating Free Speech Rights

Anthropic, a prominent artificial intelligence (AI) firm based in San Francisco, has accused the U.S. government of unconstitutional behavior, alleging that it has retaliated against the company for its expressed views on AI safety. This claim comes amidst a broader debate over the ethical implications of AI technologies and the role of government in regulating their applications.

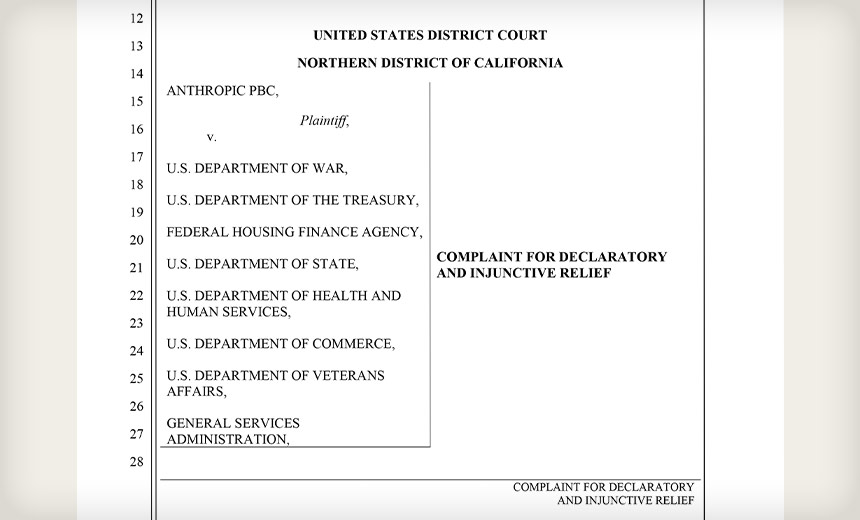

In a detailed 48-page lawsuit filed on a recent Monday, Anthropic describes how the government imposed economic sanctions after the company stated that its AI should not be used for purposes such as autonomous lethal warfare or extensive surveillance of American citizens. Anthropic argues that the government’s decision to bar the firm from federal contracts violates both the Constitution and federal statutes.

In the lawsuit, Anthropic highlighted a directive from the President, which instructed all federal agencies to “IMMEDIATELY CEASE all use of Anthropic’s technology.” This directive followed Anthropic’s firm position against the use of its AI model, Claude, for those military purposes, despite previous agreements with the Department of War that allowed for certain limitations.

On a recent Thursday, the Pentagon designated Anthropic as a “supply-chain risk,” effectively barring defense contractors from using the company’s technology in connection with military operations. The Department of War did not comment on the lawsuit when approached by the Information Security Media Group, and Anthropic did not respond to a request for further comment.

Anthropic Maintains Models Are Not Designed for Autonomous Weapons

Anthropic has further asserted that its models have never been validated or trained for the purpose of autonomous weaponry. The firm cautioned that AI-assisted surveillance could exacerbate errors, or lead to misuse, in ways that existing legal frameworks do not adequately address. During contract negotiations, however, the Department of War pressed for the removal of these usage restrictions, seeking to allow the military to deploy Claude in “all lawful uses.”

According to the company’s complaint, these usage restrictions arise from Anthropic’s unique insight into the risks and limitations of Claude, notably its potential for making mistakes and its considerable capability to analyze massive datasets, including sensitive information about American citizens. Anthropic argues that the government’s actions are punitive measures aimed at coercing the company to forgo its principles, rather than a mere exercise in vendor selection.

The Department of War publicly labeled Anthropic’s CEO as excessively “ideological” and accused him of having a “God-complex” that, according to the government, threatens national safety. The firm was given an ultimatum: comply with the government’s demands by a specified deadline or face significant consequences.

The supply-chain risk designation allows the government to exclude contractors whose offerings are believed to pose risks of sabotage or compromise by adversarial nations. Anthropic argues that this designation was never intended to address disagreements over policy positions and maintains that the government has never claimed its technology is technically insecure. Instead, the company alleges, the designation is being used as a tactic in a contract dispute.

Due Process Concerns Over Supply-Chain Risk Label

Anthropic contends that the government failed to base the supply-chain risk designation on solid evidence of potential links to foreign adversaries, which it argues violates due process rights by imposing severe penalties without any prior investigation or hearing. According to Anthropic, being abruptly labeled a national security risk has forced federal agencies to terminate contracts and has had a ripple effect, calling into question whether military contractors may lawfully work with Anthropic.

The firm insists that such actions infringe upon its property and liberty interests, which encompass its reputation, business relationships, and future prospects. Anthropic asserts that the government’s process lacked essential due diligence and disregarded procedures established by Congress, and it characterizes the actions as arbitrary and capricious.

The designation as a national security risk carries significant weight, especially in an environment where the executive has threatened severe repercussions for non-compliance. The label has already led to the cancellation of Anthropic’s contracts with the federal government, while also casting doubt on existing and potential partnerships in the private sector. The situation poses a serious challenge for Anthropic, jeopardizing millions of dollars in contracts and future opportunities.