Anthropic Appeals Supply-Chain Risk Designation, Cites Economic Threats

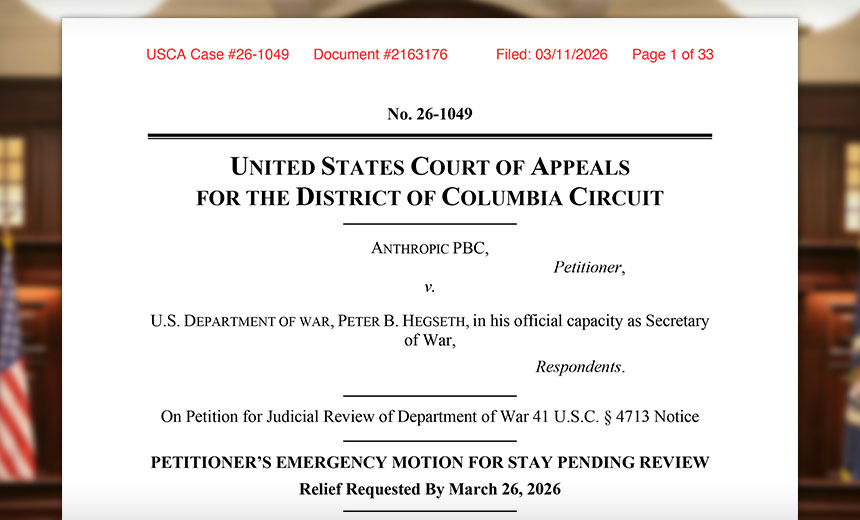

In a significant legal move, Anthropic, a leading artificial intelligence company based in San Francisco, submitted a request to the U.S. Court of Appeals late Wednesday, seeking a temporary stay against the U.S. Department of War’s designation of the firm as a supply-chain risk. This classification imposes serious restrictions on government contractors’ ability to utilize Anthropic’s Claude AI models, which could ultimately be detrimental to the company’s market position and financial stability.

Anthropic contends that the government’s designation effectively prohibits defense contractors from using its AI technologies, which could lead to the company being blacklisted from federal contracts. The firm alleges that the action is not only incendiary but also retaliatory, a response to Anthropic’s firm stance against the use of its AI in autonomous lethal warfare or widespread surveillance of American citizens. In its detailed filing, Anthropic described the governmental decision as “unlawful,” “retaliatory,” and procedurally flawed.

The filing highlighted the unprecedented nature of the government’s designation against a prominent American tech firm. Anthropic asserts that this process originated not from a formal agency decision but rather from a public statement by Secretary Hegseth on social media, labeling Anthropic as a "Supply-Chain Risk." In a comprehensive 238-page document submitted at 11:15 p.m. on Wednesday, the company wrote, "This case involves extraordinary assertions of executive power."

In addition to its appeal, Anthropic initiated a lawsuit claiming that the government acted unconstitutionally by retaliating against the company for its expressed viewpoints on AI safety. The company argues that the government’s actions violate both constitutional rights and statutory law, which should provide protections against such punitive measures.

The supply-chain risk designation carries severe consequences. According to Anthropic, the decision could cut off military contractors’ access to its Claude AI models, threatening revenue that could amount to hundreds of millions, if not billions, of dollars. The Department of War has not responded to requests for comment regarding the lawsuit, nor has Anthropic issued any follow-up statements.

Legal Framework: Procedural Concerns and Due Process

Anthropic argues that the government’s designation process failed to meet the legal requirements that normally govern such a significant action. The process for declaring a contractor a supply-chain risk should include comprehensive risk assessments, internal recommendations, and procedural safeguards, but Anthropic claims none of these were followed. Instead, the government allegedly issued a public denunciation first and only attempted to justify it through a statutory notice days later, a sequence Anthropic claims was driven by political motivations.

The filing takes issue with the Secretary’s decision, asserting that it contravenes Section 4713, which lays out strict criteria for officials seeking to remove companies from federal supply chains. According to the law, the Secretary can invoke this authority only after undertaking a risk assessment that identifies significant risks to a "covered procurement," and after affording the contractor a reasonable opportunity to respond to the evidence against it.

Anthropic maintains that it has a robust record of collaboration and extensive vetting with the U.S. government and its defense agencies, contributing to national security priorities, including AI safety research and operational models. Anthropic co-founder Jared Kaplan noted that the company’s systems are the first AI models used by U.S. military forces on classified platforms, underscoring the company’s alignment with national security goals.

Impact on Business and Future Operations

Anthropic has stated that the government’s labeling of the company as a supply-chain risk not only stigmatizes it but is also already causing material disruptions to its business operations. Clients have begun to question the viability of continuing partnerships with Anthropic, leading to the cancellation of significant contracts and threatening the very foundation of the company’s revenue streams. The firm has stressed that due process requires transparency and a fair opportunity to contest any legal designation, which it claims has not been afforded.

Citing established precedents, Anthropic emphasizes that both the Supreme Court and lower courts have underscored the necessity for a transparent factual basis for any punitive action and a meaningful rebuttal opportunity—criteria that, according to Anthropic, have not been met in this instance.

The firm argues that absent a timely stay of the government’s designation, it faces irreparable harm, particularly because the government typically enjoys sovereign immunity, which prevents recovery of any damages incurred. Anthropic stresses, "The loss of constitutional freedoms unquestionably constitutes irreparable injury," linking the damage not only to financial loss but also to constitutional rights related to due process and First Amendment freedoms.

Anthropic further contends that the Department of War itself has mandated that the company continue to provide its AI systems for several months during any transitional phase, casting doubt on the legitimacy of the government’s risk designation. The firm ultimately argues that staying the Secretary’s actions would impose no harm on the government and would merely restore the status quo that existed before the February 27 designation.

Conclusion

As the legal battle unfolds, the outcome will likely hinge on interpretations of administrative law, constitutional rights, and the balance between national security and free expression in the tech industry. Anthropic’s position highlights significant issues surrounding state power and the fragility of business relationships in the face of governmental designations, raising questions about due process and fairness in an increasingly complex technological landscape. The case is not merely a legal dispute but a broader commentary on the evolving relationship between government actions and corporate innovation in the age of artificial intelligence.