The adoption of generative AI by organizations has raised concerns about the security of these tools, and security teams are now focusing on how to secure them. According to a recent Gartner survey, one-third of respondents reported either using or implementing AI-based application security tools to mitigate the risks associated with generative AI.

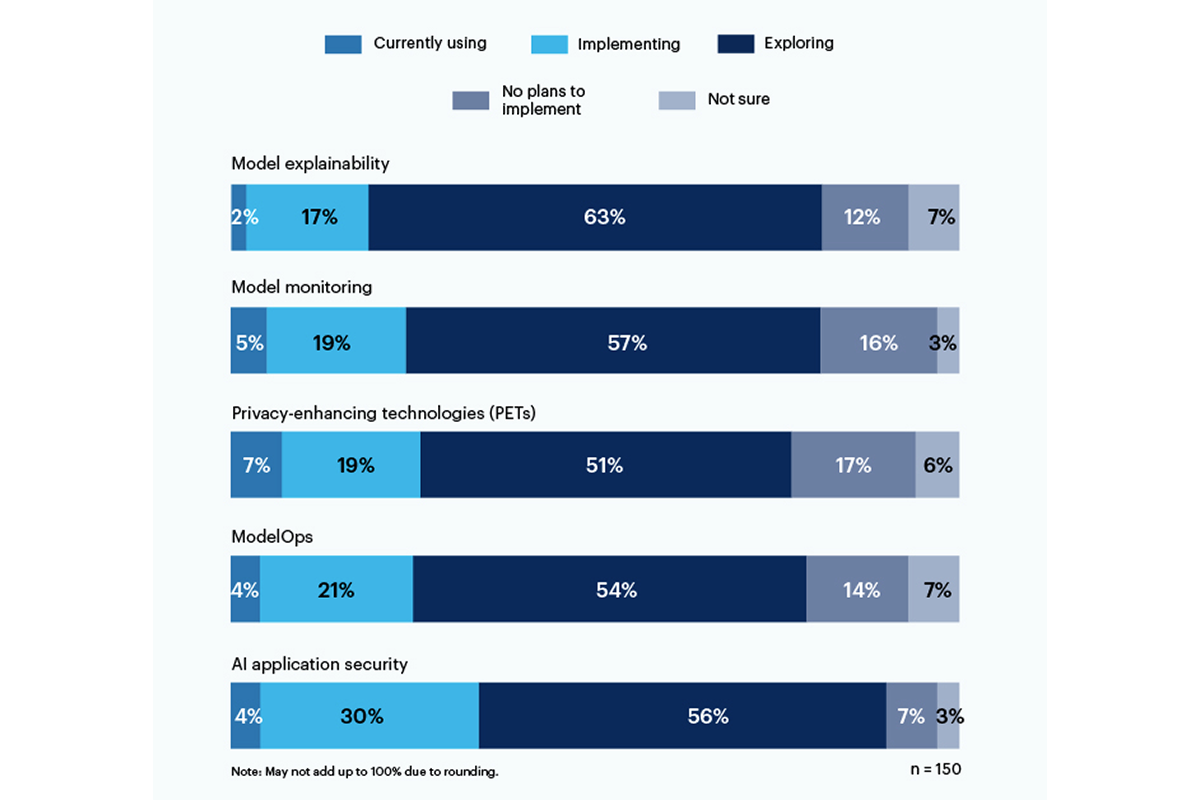

Among the various security measures mentioned in the survey, privacy-enhancing technologies (PETs) emerged as the most widely adopted, with 7% of respondents currently using them and 19% planning to implement them. PETs include techniques such as homomorphic encryption, AI-generated synthetic data, secure multiparty computation, federated learning, and differential privacy. However, it is worth noting that 17% of the organizations surveyed have no plans to implement PETs in their environment.

Another area of concern highlighted in the survey is the need for model explainability. Only 19% of respondents reported using or implementing tools for model explainability, but there is significant interest (56%) in exploring and understanding these tools to address the risks associated with generative AI. Gartner states that explainability, model monitoring, and AI application security tools can all contribute to achieving trustworthiness and reliability in enterprise AI models, regardless of whether they are open source or proprietary.

The survey also identified the generative AI risks that worry organizations most. The top concerns are incorrect or biased outputs (58%), vulnerabilities or leaked secrets in AI-generated code (57%), and potential copyright or licensing issues arising from AI-generated content (43%). These risks highlight the need for transparency and a better understanding of the data models used in generative AI systems.

A C-suite executive who participated in the survey described the difficulty created by the lack of transparency in data models, stating, “There is still no transparency about [the] data models are training on, so the risk associated with bias and privacy is very difficult to understand and estimate.” This sentiment underscores the importance of addressing these concerns to ensure the responsible and secure use of generative AI.

In response to the growing need for security in AI, the National Institute of Standards and Technology (NIST) launched a public working group in June. The working group aims to address the question of how to secure AI tools based on NIST’s AI Risk Management Framework, which was released earlier this year. It is evident from the Gartner survey that companies are not waiting for NIST directives and are proactively taking steps to secure their AI tools.

As organizations continue to rely on generative AI technologies for various tasks, it is crucial to prioritize security measures to mitigate the risks associated with these tools. The adoption of privacy-enhancing technologies and the exploration of model explainability tools are steps in the right direction. By addressing concerns such as biased outputs, vulnerabilities in AI-generated code, and copyright issues, organizations can ensure the responsible and secure use of generative AI in their operations.