AI-Driven Exploit Development: Claude Opus Experiment Raises Concerns About Faster Offensive Security Work

A recent experiment by security researcher Mohan Pedhapati, known in the cybersecurity community as s1r1us, has shown how far Anthropic’s Claude Opus can accelerate offensive security work. The result has renewed concerns about how quickly artificial intelligence is advancing in exploit development, even against heavily hardened targets such as Google Chrome and its V8 JavaScript engine.

The findings emerged shortly after Anthropic unveiled Claude Mythos Preview and Project Glasswing, an initiative aimed at strengthening software security. Pedhapati, who is Chief Technology Officer at Hacktron, said the experiment targeted the Discord Desktop application, which was running on a bundled Chromium 138 build. That build is well behind current upstream releases. Outdated builds matter because they remain exposed to flaws that have already been fixed upstream, so attackers can reuse publicly known, patched bugs against them.

The experiment started from CVE-2026-5873, a critical out-of-bounds read and write vulnerability in the V8 engine. Google fixed the bug in Chrome version 147.0.7727.55. According to the National Vulnerability Database (NVD), the flaw allowed a remote attacker to execute arbitrary code inside the Chrome sandbox via a malicious HTML page. The report characterizes it as a serious memory corruption issue in its own right.

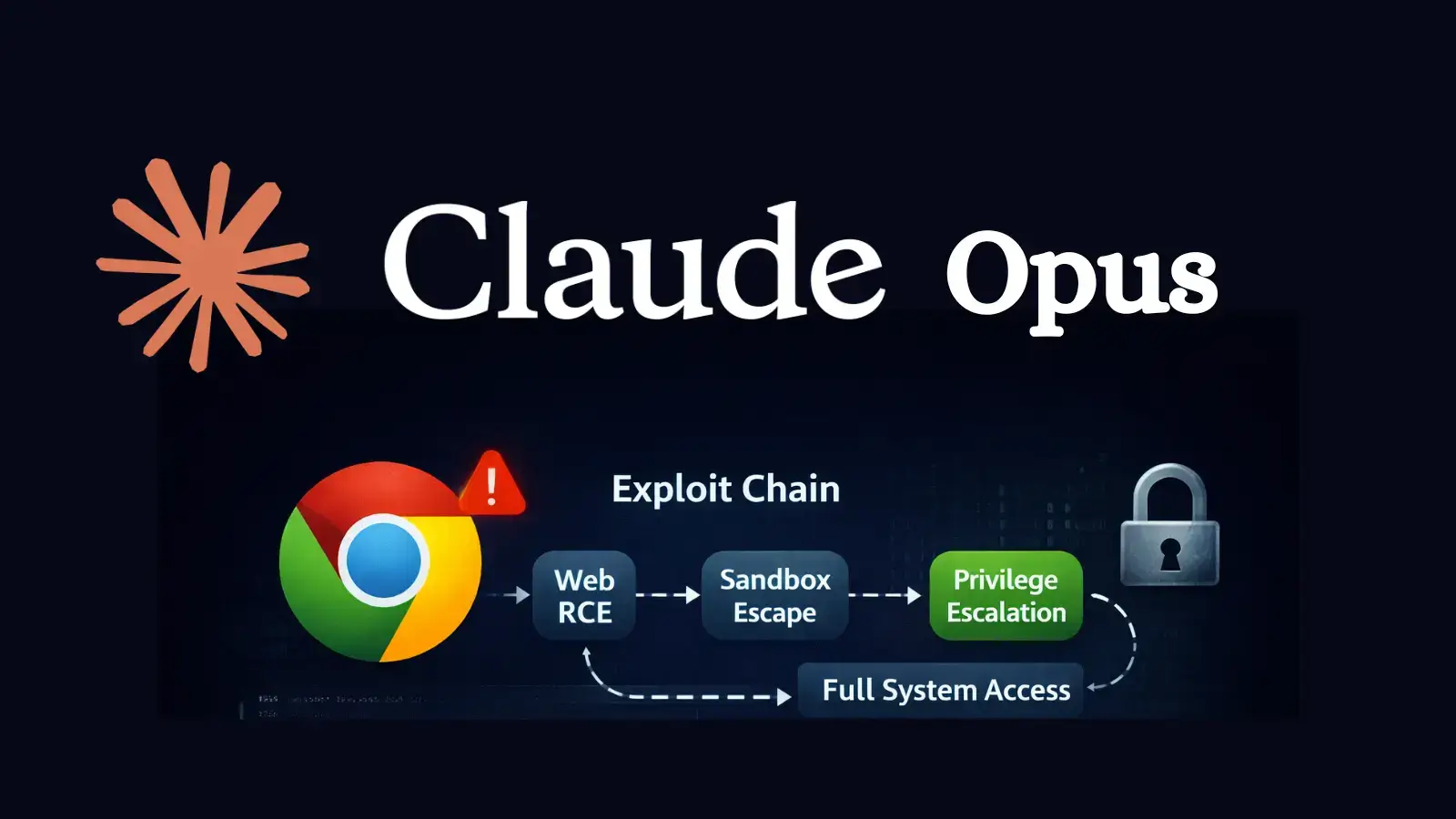

In a detailed account, Hacktron explained how Claude Opus used patch information and repeated debugging to turn the vulnerability into a working out-of-bounds primitive. It then applied a publicly disclosed V8 sandbox bypass to move toward full arbitrary code execution. The technical details were intricate, but the overall plan was simple: first gain memory access within V8, then escape the V8 sandbox, and finally redirect execution to run commands.

The proof-of-concept worked on ARM64 macOS, where it launched the Calculator application, a payload researchers commonly use to demonstrate successful code execution.

What stands out most, however, is the process Pedhapati followed with Claude Opus. The researcher reported that the work took approximately one week, with 22 interactive sessions with the AI and 27 unsuccessful attempts before a working exploit chain emerged. The effort consumed 2.33 billion tokens across 1,765 requests, cost $2,283 in API usage, and required around 20 hours of human oversight. In other words, Opus showed significant capability but behaved more like a highly skilled yet erratic assistant than like a fully autonomous hacker.
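To put the reported totals in perspective, the short Python sketch below derives a few simple averages from them. The input figures are the ones quoted above; the function name `summarize_effort` and the dictionary keys are illustrative choices, and the results are plain averages, since the report as quoted here does not break the totals down by token type or by session.

```python
# Figures as reported in the article.
TOTAL_TOKENS = 2_330_000_000   # 2.33 billion tokens
TOTAL_REQUESTS = 1_765
TOTAL_COST_USD = 2_283
HUMAN_HOURS = 20               # approximate, per the article
SESSIONS = 22


def summarize_effort(tokens: int, requests: int, cost_usd: float,
                     human_hours: float, sessions: int) -> dict[str, float]:
    """Return simple averages derived from the reported totals."""
    return {
        "usd_per_million_tokens": cost_usd / (tokens / 1_000_000),
        "usd_per_request": cost_usd / requests,
        "tokens_per_request": tokens / requests,
        "requests_per_session": requests / sessions,
        "api_usd_per_human_hour": cost_usd / human_hours,
    }


if __name__ == "__main__":
    stats = summarize_effort(TOTAL_TOKENS, TOTAL_REQUESTS, TOTAL_COST_USD,
                             HUMAN_HOURS, SESSIONS)
    for name, value in stats.items():
        print(f"{name}: {value:,.2f}")
```

Read this way, the API bill works out to a little under one dollar per million tokens and a little over one dollar per request, which helps explain why the total cost, rather than any single step, is the figure that draws attention.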

This nuance matters for cybersecurity professionals. The findings suggest that current AI models are not a substitute for skilled exploit developers, but they can substantially cut the time needed to turn a known vulnerability into a practical exploit chain. The concern grows when software ships outdated components, because each lagging version gives attackers a longer window in which known vulnerabilities remain usable.

Anthropic has echoed these concerns in its security communications, suggesting that the capabilities of Claude Mythos Preview could rival even the most adept human experts at identifying and exploiting vulnerabilities. Citing that risk, the company has decided not to release Mythos broadly at this time. Instead, Anthropic has launched Project Glasswing in collaboration with industry giants such as AWS, Apple, Cisco, CrowdStrike, Google, Microsoft, Nvidia, and JPMorgan Chase. The initiative aims to use advanced AI to secure critical software before malicious actors can gain similar advantages.

For security teams, the practical lesson is clear. The Chromium builds bundled inside Electron-style desktop applications deserve the same scrutiny as the main Chrome browser. Patch delays in those applications should be treated as real security exposure, not as routine maintenance backlog.
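As a rough illustration of that advice, the Python sketch below compares the Chromium version bundled with each desktop application against the Chrome release that fixed CVE-2026-5873. The fixed version and the Discord Desktop entry come from the figures above; the helper names and the two other inventory entries are hypothetical. How the bundled version is collected is left out: Electron exposes it at runtime as `process.versions.chrome`, but gathering that across a fleet is an assumption this sketch does not cover.

```python
# Chrome release that fixed CVE-2026-5873, as stated in the article.
FIXED_VERSION = "147.0.7727.55"


def parse_version(text: str, width: int = 4) -> tuple[int, ...]:
    """Turn '147.0.7727.55' (or a short form like '138') into a padded int tuple."""
    parts = [int(piece) for piece in text.strip().split(".")]
    parts += [0] * (width - len(parts))  # pad short forms with zeros
    return tuple(parts)


def is_behind_fix(bundled: str, fixed: str = FIXED_VERSION) -> bool:
    """True if the bundled Chromium version is older than the fixed release."""
    return parse_version(bundled) < parse_version(fixed)


def flag_outdated(inventory: dict[str, str],
                  fixed: str = FIXED_VERSION) -> list[str]:
    """Return the names of apps whose bundled Chromium predates the fix."""
    return sorted(name for name, version in inventory.items()
                  if is_behind_fix(version, fixed))


if __name__ == "__main__":
    # Only the Discord entry reflects the article; the others are made up.
    inventory = {
        "Discord Desktop": "138",
        "Hypothetical App A": "147.0.7727.55",
        "Hypothetical App B": "147.0.7727.54",
    }
    for app in flag_outdated(inventory):
        print(f"{app}: bundled Chromium {inventory[app]} predates {FIXED_VERSION}")
```

Comparing versions as integer tuples rather than as strings avoids ordering mistakes such as treating "99" as newer than "138". A real inventory would also need to track more than one CVE per build.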

Ultimately, AI is not poised to replace exploit developers, but this experiment shows that it can speed up the work of skilled attackers and potentially make that work cheaper. Security professionals will need to respond proactively, above all by shortening the window between an upstream fix and its arrival in the software they ship and run.